IoT Edge-Heavy Computing for Video with Soracom

The Soracom team has been having a great time at CeBIT 2017, and one of the exhibits getting a lot of attention at our booth this week is a joint demonstration developed in partnership with Preferred Networks, Inc. for IoT edge-heavy computing for video.

Preferred Networks has developed an extraordinary reputation for deep learning technology focused on IoT. Its Deep Intelligence In Motion Platform (DiMo) powers deep learning solutions applied by leaders in transportation, manufacturing, and biotech, including Toyota Motor Corporation and Japan’s National Cancer Research Center.

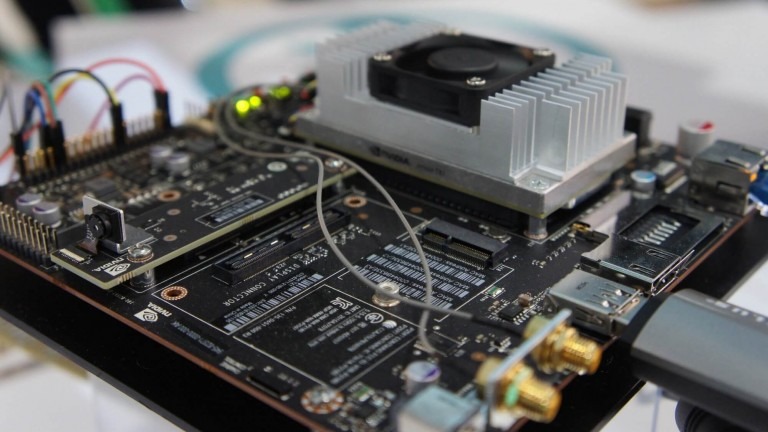

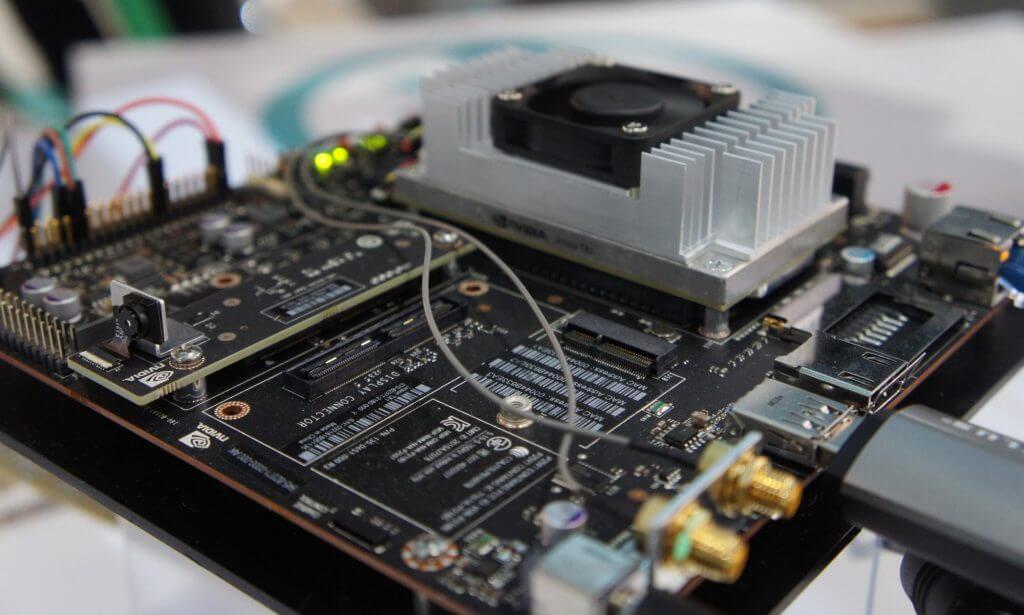

DiMo running on Nvidia Jetson TX1

This demo shows how deep learning technologies and smart connectivity can work together to empower remote devices, address challenges related to bandwidth and privacy, and deliver effective real-time analysis at the edge.

Powering an IoT Edge

Advances in wireless connectivity make it easier than ever to keep remote devices connected affordably and securely, providing a steady stream of data for analysis and visualization in the cloud.

However, even with help from advanced services like Soracom Beam and Soracom Funnel, data-intensive payloads like audio and video can place a heavy burden on available bandwidth and strain the resources of low-power, low-memory devices.

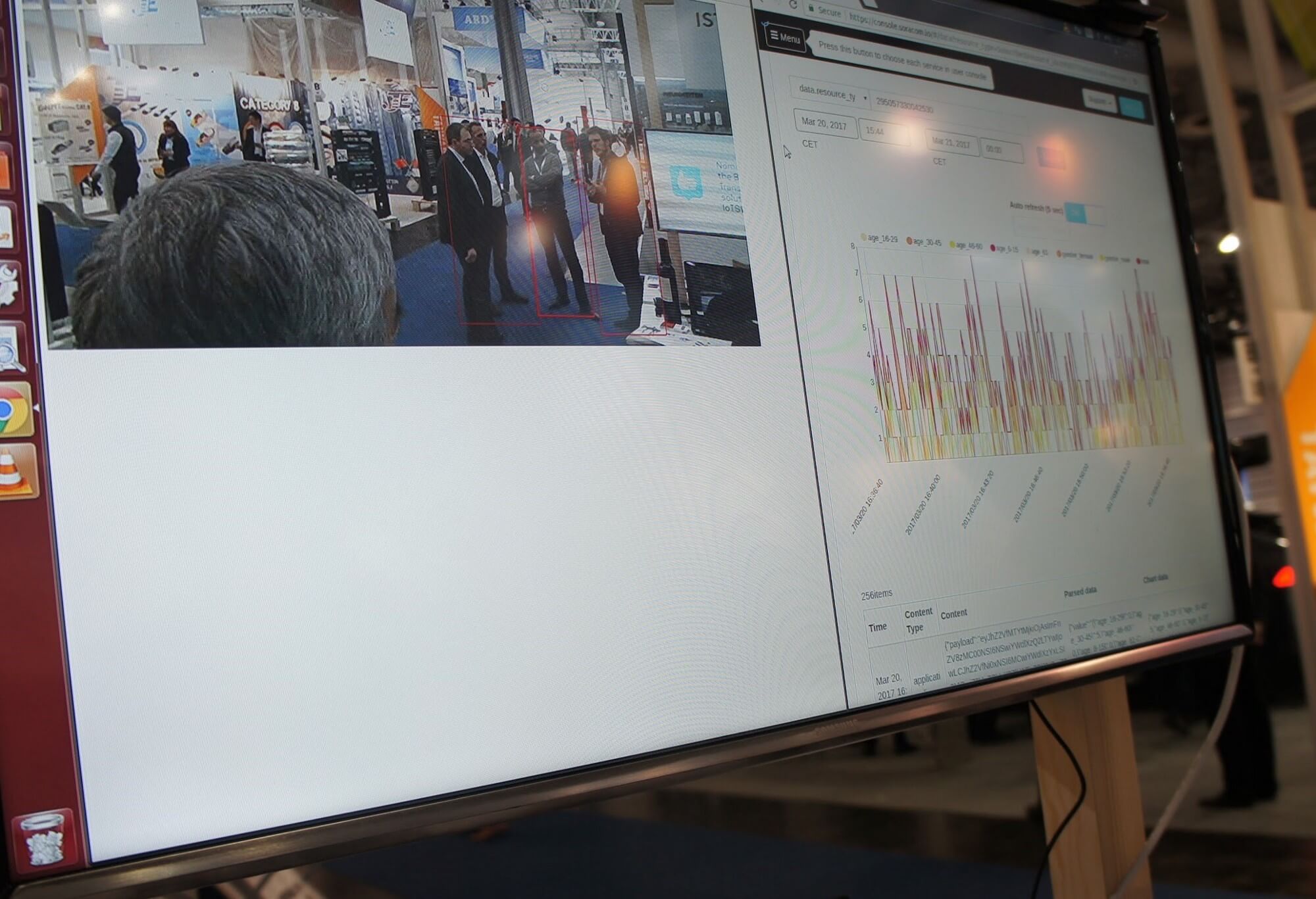

In this demonstration, the Preferred Networks DiMo platform runs on Nvidia’s high-performance Jetson TX1 embedded GPU module, processing video captured by a camera on the show floor in real time. Before anything is transmitted, DiMo analyzes and summarizes the video data directly on the device. The summarized data is then sent to the cloud using Soracom Air, and generalized show-floor demographics are visualized in real time with Soracom Harvest.

Real-time visualization with Soracom Harvest

PFN calls this solution Edge Heavy Computing, and it offers significant advantages over sending raw video or audio directly from devices to the cloud.

- Analysis of high-quality, high-resolution video can be conducted without concern for data size

- Full analysis is possible with only small amounts of data transmitted, reducing bandwidth and power demands

- Images can be analyzed at full resolution at the edge rather than reduced in quality before transmission for future analysis

- Images can be discarded on the camera side after analysis to maintain privacy since they are not accumulated in the cloud
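To make the bandwidth and privacy points concrete, here is a minimal Python sketch of the "summarize on the device, send only the summary" idea. It is not DiMo code and does not reflect the demo's actual implementation: the `Detection` type, its `age_group` and `gender` fields, and the function names are all hypothetical, chosen only to illustrate how per-person detections could be reduced to aggregate counts before anything leaves the device.

```python
import json
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One person found in a frame (hypothetical output of an on-device model)."""
    age_group: str  # assumed label, e.g. "20-39"
    gender: str     # assumed label, e.g. "female"


def summarize(detections):
    """Collapse per-person detections into aggregate counts; no pixels are kept."""
    by_age = Counter(d.age_group for d in detections)
    by_gender = Counter(d.gender for d in detections)
    return {
        "people": len(detections),
        "by_age": dict(sorted(by_age.items())),
        "by_gender": dict(sorted(by_gender.items())),
    }


def encode(summary):
    """Serialize the summary as compact JSON bytes, ready to upload."""
    return json.dumps(summary, separators=(",", ":")).encode("utf-8")


def raw_frame_bytes(width, height, channels=3):
    """Size of one uncompressed 8-bit frame, for comparison only."""
    return width * height * channels


# Illustrative input: three made-up detections from a single frame.
detections = [
    Detection("20-39", "female"),
    Detection("20-39", "male"),
    Detection("40-59", "male"),
]
payload = encode(summarize(detections))
print(len(payload), "bytes of summary vs",
      raw_frame_bytes(1920, 1080), "bytes for one raw 1080p frame")
```

In a setup like the one described here, the encoded payload would be what the device uploads over its cellular connection, while the frames themselves are dropped once `summarize` has run. The upload step is deliberately left out, since its details depend on how the device and service are configured.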

The Soracom team has been honored to have the opportunity to participate in this demo, and the response from attendees at CeBIT has been incredible. If you’re in Hanover, there’s still time to drop by and see for yourself at Hall 12, Stand B37.

If not, you can read the full press release, and stay tuned for more to come.

………………

Got a question for Soracom? Whether you’re an existing customer, interested in learning more about our products and services, or want to learn about our Partner program, we’d love to hear from you.